How RoboBrain Builds Long-Term Robot Memory with Milvus

This post is contributed by Song Zhi, CEO of Senqi AI, an embodied-AI company building task-execution infrastructure for robots. RoboBrain is one of Senqi AI’s core products.

Most robot capabilities work fine on their own. A navigation model can plan a route. A perception model can identify objects. A speech module can accept instructions. The production failure appears when those capabilities have to run as one continuous task.

For a robot, a simple instruction like “go check that area, photograph anything unusual, and notify me” requires planning before the task starts, adapting while it runs, and producing a useful result when it finishes. Each handoff can break: navigation freezes behind an obstacle, a blurry photo is accepted as final, or the system forgets the exception it handled five minutes ago.

That is the core challenge for AI agents operating in the physical world. Unlike digital agents, robots execute against continuous unstructured data: blocked paths, changing light, battery limits, sensor noise, and operator rules.

RoboBrain is Senqi AI’s embodied-intelligence operating system for robot task execution. It sits at the task layer, connecting perception, planning, execution control, and data feedback so natural-language instructions can become structured, recoverable robot workflows.

| Breakpoint | What Fails in Production | How RoboBrain Closes It |

|---|---|---|

| Task planning | Vague instructions leave downstream modules without concrete execution fields. | Task objectification turns intent into shared state. |

| Context routing | The right information exists, but reaches the wrong decision stage. | Tiered memory routes real-time, short-term, and long-term context separately. |

| Data feedback | A single pass completes or fails without improving the next run. | Feedback writebacks update task state and long-term memory. |

Three Breakpoints in Robot Task Execution

Software tasks can often be bounded as input, process, and result. Robot tasks run against a moving physical state: blocked paths, changing light, battery limits, sensor noise, and operator rules.

That is why the task loop needs more than isolated models. It needs a way to preserve context across planning, execution, and feedback.

1. Task Planning: Vague Instructions Produce Vague Execution

A phrase like “go take a look” hides a lot of decisions. Which area? What should the robot photograph? What counts as unusual? What should it do if the shot fails? What result should it return to the operator?

If the task layer cannot resolve those details into concrete fields — target area, inspection object, completion condition, failure policy, and return format — the task runs without direction from the start and never recovers context downstream.

2. Context Routing: The Right Data Reaches the Wrong Stage

The robot stack may already contain the right information, but task execution depends on retrieving it at the right stage.

The startup phase needs maps, area definitions, and operating rules. Mid-execution needs live sensor state. Exception handling needs similar cases from prior deployments. When those sources are mixed up, the system makes the right kind of decision with the wrong context.

When routing fails, startup pulls stale experience instead of area rules, exception handling cannot reach the cases it needs, and mid-execution gets yesterday’s map instead of live readings. Giving someone a dictionary does not help them write an essay. The data has to reach the right decision point, at the right stage, in the right form.

3. Data Feedback: Single-Pass Execution Does Not Improve

Without writeback, a robot can finish a run without improving the next one. A completed action still needs a quality check: is the image sharp enough, or should the robot reshoot? Is the path still clear, or should it detour? Is the battery above threshold, or should the task terminate?

A single-pass system has no mechanism for those calls. It executes, stops, and repeats the same failure next time.

How RoboBrain Closes the Robot Task Loop

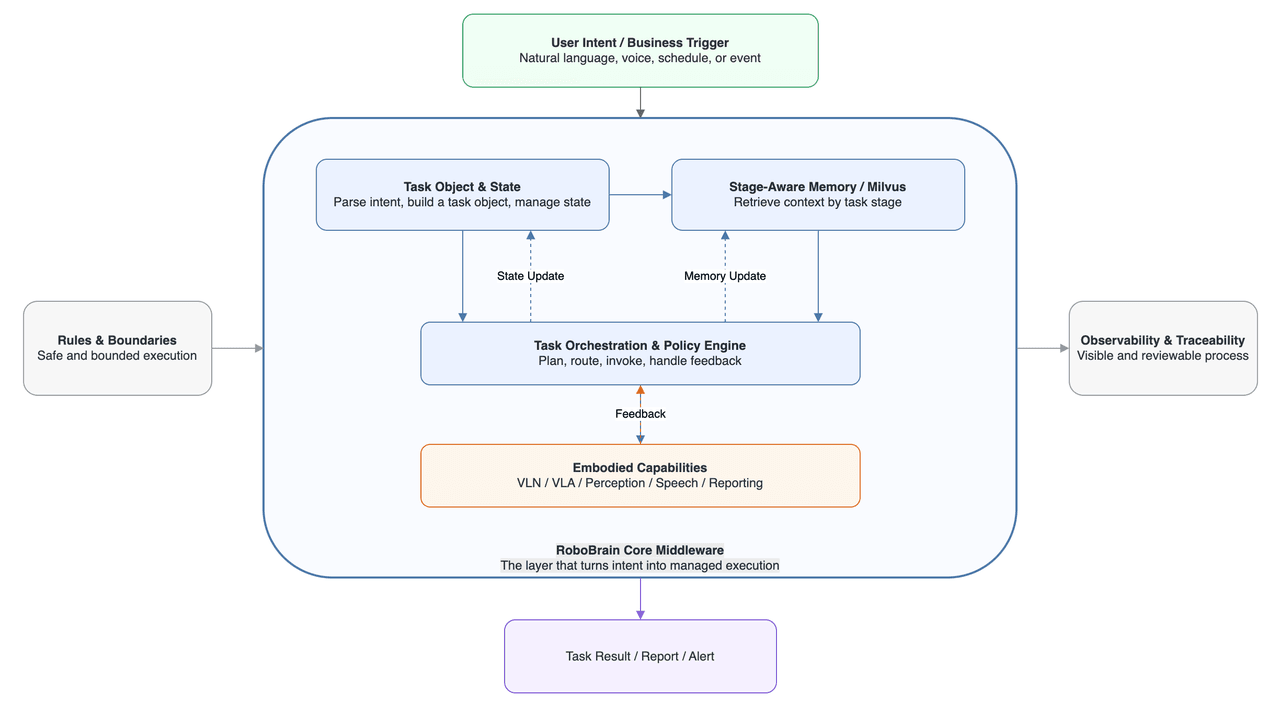

RoboBrain connects environment understanding, task planning, execution control, and data feedback into one operating loop.

RoboBrain core middleware architecture showing how user intent flows through task objects, stage-aware memory powered by Milvus, and a policy engine before reaching embodied capabilities

RoboBrain core middleware architecture showing how user intent flows through task objects, stage-aware memory powered by Milvus, and a policy engine before reaching embodied capabilities

In the architecture described in this contributed post, that loop is implemented through three mechanisms:

- Task objectification structures the entry point.

- Tiered memory routes the right information to the right stage.

- A feedback loop writes results back and decides the next move.

They only work as a set. Fix one without the others and the chain still breaks at the next point.

1. Task Objectification: Turning Intent into Shared State

Before execution starts, RoboBrain turns each instruction into a task object: task type, target area, inspection object, constraints, expected output, current stage, and failure policy.

The point is not just parsing language. The point is giving every downstream module the same stateful view of the task. Without that conversion, the task has no direction.

For the patrol example, the task object fills in the inspection type, designated zone, anomalous items as the check object, battery >= 20% as the constraint, a clear anomaly photo plus operator alert as the expected output, and return-to-base as the failure policy.

The stage field updates as the run changes. An obstacle moves the task from navigating to detouring or requesting help. A blurry image moves it from inspecting to reshooting. A low battery moves it to termination and return-to-base.

Downstream modules no longer receive isolated commands. They receive the current task stage, its constraints, and the reason the stage changed.

2. Tiered Memory: Routing Context to the Right Stage

RoboBrain splits task-relevant information into three tiers so the right data reaches the right stage.

Real-time state holds pose, battery, sensor readings, and environmental observations. It supports decisions at every control step.

Short-term context records events within the current task: the obstacle the robot avoided two minutes ago, the photo it reshot, or the door it failed to open on the first try. It keeps the system from losing track of what just happened.

Long-term semantic memory stores scene knowledge, historical experience, exception cases, and post-task writebacks. A particular parking area may require camera-angle adjustments at night because of reflective surfaces. A certain anomaly type may have a history of false positives and should trigger human review instead of an automatic alert.

This long-term tier runs on vector similarity search through the Milvus vector database, because retrieving the right memory means matching by meaning, not by ID or keyword. Scene descriptions and handling records are stored as vector embeddings and retrieved with approximate nearest neighbor search to find the closest semantic matches.

Startup pulls area rules and past patrol summaries from long-term memory. Mid-execution relies on real-time state and short-term context. Exception handling uses semantic search to find similar cases in long-term memory.

3. Feedback Loop: Writing Results Back into the System

RoboBrain writes navigation, perception, and action results back to the task object after each step, updating the stage field. The system reads those observations and decides the next move: detour if the path is unreachable, reshoot if the image is blurry, retry if the door will not open, or terminate if battery is low.

Execution becomes a cycle: execute, observe, adjust, execute again. The chain keeps adapting to environmental changes instead of cutting off the first time something unexpected shows up.

How Milvus Powers RoboBrain’s Long-Term Robot Memory

Some robot memory can be queried by task ID, timestamp, or session metadata. Long-term operational experience usually cannot.

The useful record is often the case that is semantically similar to the current scene, even if the task ID, location name, or wording is different. That makes it a vector database problem, and Milvus is a fit for the long-term memory tier.

This tier stores information such as:

- Area rule descriptions and point-location semantics

- Anomaly type definitions and example summaries

- Historical handling records and post-task review conclusions

- Patrol summaries written at task completion

- Experience writebacks after human takeover

- Failure causes and correction strategies from similar scenarios

None of that is naturally keyed by a structured field. All of it needs to be recalled by meaning.

A concrete example: the robot patrols a parking lot entrance at night. Glare from overhead lights makes anomaly detection unstable. Reflections keep getting flagged as anomalies.

The system needs to recall reshoot strategies that worked under strong nighttime glare, camera-angle corrections from similar areas, and human-review conclusions that marked earlier detections as false positives. An exact-match query can find a known task ID or time window. It cannot reliably surface “the prior glare case that behaved like this one” unless that relationship has already been labeled.

Semantic similarity is the retrieval pattern that works. Similarity metrics rank stored memories by relevance, while metadata filtering can narrow the search space by area, task type, or time window. In practice, this often becomes hybrid search: semantic matching for meaning, structured filters for operational constraints.

For implementation, the filter layer is often where semantic memory becomes operational. Milvus filter expressions define scalar constraints, while Milvus scalar queries support exact lookups when the system needs records by metadata rather than similarity.

This retrieval pattern resembles retrieval-augmented generation adapted for physical-world decision-making rather than text generation. The robot is not retrieving documents to answer a question; it is retrieving prior experience to choose the next safe action.

Not everything goes into Milvus. Task IDs, timestamps, and session metadata live in a relational database. Raw runtime logs live in a logging system. Each storage system handles the query pattern it is built for.

| Data Type | Where It Lives | How It Is Queried |

|---|---|---|

| Task IDs, timestamps, session metadata | Relational database | Exact lookups, joins |

| Raw runtime logs and event streams | Logging system | Full-text search, time-range filters |

| Scene rules, handling cases, experience writebacks | Milvus | Vector similarity search by meaning |

As tasks run and scenes accumulate, the long-term memory layer feeds downstream processes: sample curation for model fine-tuning, broader data analysis, and cross-deployment knowledge transfer. The memory compounds into a data asset that gives every future deployment a higher starting point.

What This Architecture Changes in Deployment

Task objectification, tiered memory, and the feedback loop turn RoboBrain’s task loop into a deployment pattern: each task preserves state, each exception can retrieve prior experience, and each run can improve the next one.

A robot patrolling a new building should not start from scratch if it has already handled similar lighting, obstacles, anomaly types, or operator rules elsewhere. That is what makes robot task execution more repeatable across scenes, and what makes long-term deployment costs easier to control.

For robotics teams, the deeper lesson is that memory is not just a storage layer. It is part of execution control. The system needs to know what it is doing, what just changed, what similar cases have happened before, and what should be written back for the next run.

Further Reading

If you are working on similar problems with robot memory, task execution, or semantic retrieval for embodied AI, these resources are useful next steps:

- Read the Milvus documentation or try the Milvus quickstart to see how vector search works in practice.

- Review the Milvus architecture overview if you are planning a production memory layer.

- Browse vector database use cases for more examples of semantic search in production systems.

- Join the Milvus community to ask questions and share what you are building.

- If you want managed Milvus instead of running your own infrastructure, learn more about Zilliz Cloud.

- Three Breakpoints in Robot Task Execution

- How RoboBrain Closes the Robot Task Loop

- How Milvus Powers RoboBrain's Long-Term Robot Memory

- What This Architecture Changes in Deployment

- Further Reading

On This Page

Try Managed Milvus for Free

Zilliz Cloud is hassle-free, powered by Milvus and 10x faster.

Get StartedLike the article? Spread the word